How to Add a Custom robots.txt in WordPress (2026)


The first time I broke a site’s crawling, it happened in seconds. I was cleaning up “bot noise,” pasted one line into the robots.txt file, hit save, and went back to work. Later that day, pages started dropping out of search like books falling off a shelf. That experience showed me how much a single crawl rule can matter for SEO.

That little file matters because it sits at your front door. Search engine crawlers check it before they crawl the rest of your site. When you set up a custom robots.txt in WordPress the right way, you can guide them toward the pages you want crawled and away from the stuff that wastes crawl budget.

In this guide, I’ll show you how I check what WordPress is serving, how I decide between a plugin and a real file, and a few safe robots.txt templates you can copy and paste.

robots.txt basics (and the trap that gets people)

A robots.txt file gives crawlers rules for what they may crawl. It’s a public file served at:

https://yourdomain.com/robots.txt

A few important truths keep you out of trouble:

- robots.txt is not security. Anyone can still visit a blocked URL in a browser.

- robots.txt is not a guarantee. Search engine crawlers follow it; malicious bots may ignore it.

- robots.txt is not the same as noindex. This is the big one.

When you Disallow a page, Google may still index the URL if it finds links to it, but it might show up without content (because Google couldn’t crawl it). If your goal is “do not show this page in search,” you usually want a noindex meta tag (or an HTTP header) to prevent indexing, while still allowing crawling long enough for Google to see the noindex.

If you block a URL in robots.txt, Google may not be able to see your noindex tag on that URL.
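To make the difference concrete, here is a minimal Python sketch of what a crawler has to do to find a noindex signal: it must fetch the page and read either the robots meta tag or the `X-Robots-Tag` response header. The class and function names are my own for illustration, and the check is deliberately simplified (it does not handle bot-specific meta tags such as `googlebot`).

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects the directives found in <meta name="robots"> tags."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {name.lower(): (value or "") for name, value in attrs}
        if attr.get("name", "").lower() == "robots":
            content = attr.get("content", "")
            self.directives += [d.strip().lower() for d in content.split(",")]


def has_noindex(html, headers=None):
    """Return True if the page asks not to be indexed via meta tag or X-Robots-Tag."""
    parser = RobotsMetaParser()
    parser.feed(html)
    header_value = ""
    for name, value in (headers or {}).items():
        if name.lower() == "x-robots-tag":
            header_value = value.lower()
    return "noindex" in parser.directives or "noindex" in header_value


page = '<html><head><meta name="robots" content="noindex, follow"></head></html>'
print(has_noindex(page))                                          # meta tag case
print(has_noindex("<html></html>", {"X-Robots-Tag": "noindex"}))  # header case
```

Both signals live in the response itself. A crawler that is blocked by robots.txt never requests the page, so it never gets the chance to run a check like this.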

Also, WordPress often serves a virtual robots.txt. In other words, even if you never created a file, WordPress can output rules at /robots.txt. Some hosts also add their own behavior (for example, staging sites often ship with blocked indexing). Pressable has a helpful explanation of how hosting and staging can affect robots rules in their guide to Pressable sites and robots.txt.

Step 1: Check what WordPress is serving right now

Before I change anything, I open /robots.txt in an incognito window and read it like a contract. I’m looking for three things:

- Is it a “virtual robots.txt” or a real file? If you see a simple set of defaults, WordPress might be generating it.

- Is a WordPress plugin overriding it? WordPress plugins can output a virtual robots.txt, and some can edit robots.txt in a physical file too.

- Is there anything scary like `Disallow: /`? That line blocks the entire site.

If you’re using a WordPress SEO plugin, keep in mind it may override the virtual output. That’s common with the popular tools listed in SmartWP’s roundup of best WordPress SEO plugins like Yoast and Rank Math. Even if you don’t change robots.txt inside the plugin, it can still influence what’s served.

If you use the Yoast SEO plugin, their help doc on the robots.txt file in Yoast SEO is worth skimming, because it explains the “virtual robots.txt” behavior and when you need server access. You should also verify your rules in Google Search Console to ensure no errors exist.

One more quick check I do: I confirm I’m editing the live site. Staging and production get mixed up more than anyone wants to admit.
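If you would rather script that read-through, the sketch below scans robots.txt text for a bare `Disallow: /` and reports which user agents it applies to. It is a simplified parser of my own (it ignores wildcards and treats only Allow and Disallow as rule lines), and `fetch_robots` is a hypothetical helper that needs network access, so it is defined but not called here.

```python
import urllib.request


def site_wide_blocks(robots_text):
    """Return the user agents whose group contains a bare 'Disallow: /'."""
    blocked, agents, in_rules = [], [], False
    for raw in robots_text.splitlines():
        line = raw.split("#", 1)[0].strip()      # drop comments
        if ":" not in line:
            continue
        field, value = (part.strip() for part in line.split(":", 1))
        field = field.lower()
        if field == "user-agent":
            if in_rules:                         # a new group starts here
                agents, in_rules = [], False
            agents.append(value)
        elif field in ("allow", "disallow"):
            in_rules = True
            if field == "disallow" and value == "/":
                blocked.extend(agents)
    return blocked


def fetch_robots(site_url):
    """Download /robots.txt from a site (hypothetical helper, requires network)."""
    url = site_url.rstrip("/") + "/robots.txt"
    with urllib.request.urlopen(url, timeout=10) as resp:
        return resp.read().decode("utf-8", errors="replace")


sample = "User-agent: *\nDisallow: /wp-admin/\nAllow: /wp-admin/admin-ajax.php\n"
print(site_wide_blocks(sample))                          # nothing blocked site-wide
print(site_wide_blocks("User-agent: *\nDisallow: /\n"))  # the scary case
```

On a real site you would pass the result of `fetch_robots("https://yourdomain.com")` into `site_wide_blocks`. A `*` in the returned list means every crawler is being told to stay out.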

Step 2: Choose how you’ll add a custom robots.txt (plugin vs real file)

There are two reliable ways to set up a custom robots.txt in WordPress. I use this rule: if I want quick edits and my plugin handles it cleanly, I use the plugin. If I want total control (or a plugin can’t write the file), I create a real robots.txt in the site root.

Here’s the quick comparison I keep in my head:

| Method | Where it lives | Best for | Watch out for |
|---|---|---|---|
| Plugin-managed (virtual) | Generated output at /robots.txt | Fast edits via WordPress dashboard, no FTP access | Another plugin can override it |
| Physical robots.txt file | Server web root (root directory) | Full server level control, predictable | Requires FTP access, wrong path breaks it, caching can hide changes |

Option A: Edit robots.txt with an SEO plugin (virtual robots.txt)

This is the “I want it done in five minutes” route, handled directly via the WordPress dashboard. The exact menu changes between plugins and versions, but the flow is usually the same:

- Open your SEO plugin settings.

- Find the robots.txt editor (if offered).

- Save your rules.

- Re-open `yourdomain.com/robots.txt` and confirm the output changed.

If your plugin says it can’t edit robots.txt, that usually means one of two things: a physical robots.txt already exists, or your server permissions block writes.

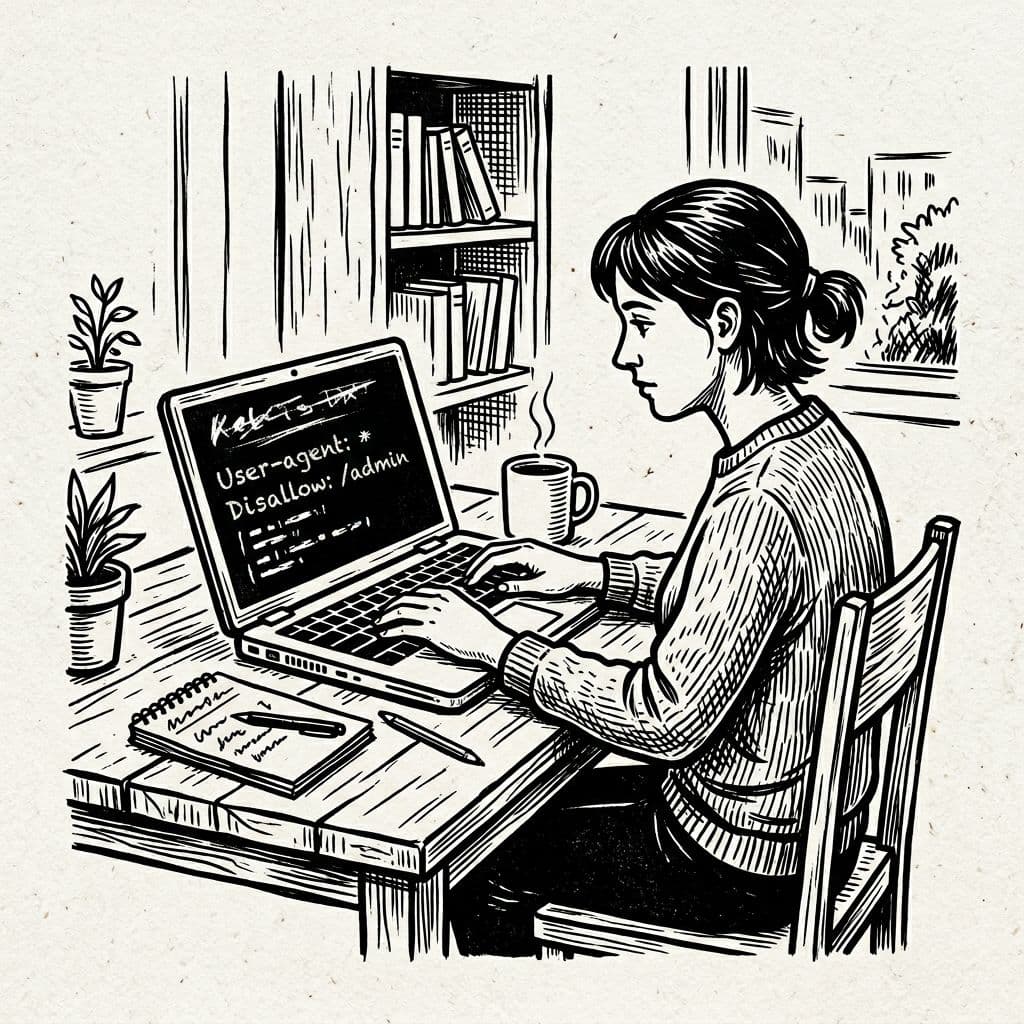

Option B: Edit robots.txt via FTP access or cPanel (physical file)

When I need a physical file for server level control, I create a plain text file named robots.txt using a text editor and upload it to the root directory (the same folder that contains wp-config.php and wp-content). If you don’t have FTP or cPanel access, a plugin with a built-in file editor can create the file instead.

Then I:

- Visit `/robots.txt` and hard refresh.

- Purge any caching layer (plugin cache, host cache, and CDN cache).

- Confirm the file I uploaded is what the browser shows.

Warning: `Disallow: /` under `User-agent: *` blocks your whole site. I only use it on staging domains.
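Confirming that the served file matches the uploaded one can be done by eye, but a small comparison helps when a cache or a virtual robots.txt is still answering. This Python sketch uses my own function names and assumes you already have both texts as strings (the served one would come from fetching the live URL). It ignores line-ending and trailing-whitespace differences, which FTP clients often introduce.

```python
def normalize(text):
    """Normalize line endings and trailing whitespace so cosmetic differences are ignored."""
    lines = text.replace("\r\n", "\n").replace("\r", "\n").split("\n")
    return "\n".join(line.rstrip() for line in lines).strip()


def served_matches_local(local_text, served_text):
    """True when the served robots.txt carries the same lines as the uploaded file."""
    return normalize(local_text) == normalize(served_text)


local = "User-agent: *\nDisallow: /wp-admin/\nAllow: /wp-admin/admin-ajax.php\n"
served_ok = "User-agent: *\r\nDisallow: /wp-admin/\r\nAllow: /wp-admin/admin-ajax.php\r\n"
served_stale = "User-agent: *\nDisallow:\n"

print(served_matches_local(local, served_ok))     # same rules, different line endings
print(served_matches_local(local, served_stale))  # an older or virtual file is still served
```

If the comparison fails after an upload, the usual suspects are the ones listed above: a cache that has not been purged, or the file sitting in the wrong folder.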

Copy-paste robots.txt rules examples (with plain-English explanations)

Example 1: A safe “default-plus-sitemap” robots.txt rule

Use this when you want sensible blocking for the wp-admin folder and a clear sitemap pointer.

User-agent: *

Disallow: /wp-admin/

Allow: /wp-admin/admin-ajax.php

Sitemap: https://example.com/sitemap_index.xml

What each directive does:

- `User-agent: *` targets all crawlers.

- `Disallow: /wp-admin/` keeps bots out of the wp-admin folder.

- `Allow: /wp-admin/admin-ajax.php` lets required AJAX requests through.

- `Sitemap:` tells crawlers where your XML sitemap lives (replace with your real sitemap URL), which helps search engines find your pages efficiently.
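The Allow line only helps because of how conflicts are resolved. Under the Robots Exclusion Protocol standard (RFC 9309), which Google follows, the most specific matching rule wins, and Allow wins a tie. Here is a minimal Python sketch of that precedence. The function is my own illustration; it handles plain path prefixes only and ignores the `*` and `$` wildcards.

```python
def is_allowed(rules, path):
    """Longest-match precedence: the most specific matching rule wins, Allow wins ties."""
    best_len, allowed = -1, True          # no matching rule means the path may be crawled
    for directive, prefix in rules:
        if not prefix or not path.startswith(prefix):
            continue                      # an empty rule value matches nothing
        is_allow = directive.lower() == "allow"
        if len(prefix) > best_len or (len(prefix) == best_len and is_allow):
            best_len, allowed = len(prefix), is_allow
    return allowed


rules = [
    ("Disallow", "/wp-admin/"),
    ("Allow", "/wp-admin/admin-ajax.php"),
]

for path in ["/wp-admin/admin-ajax.php", "/wp-admin/options.php", "/blog/hello-world/"]:
    print(path, is_allowed(rules, path))
```

Not every parser works this way. Some older ones apply the first matching line in file order, so the result for admin-ajax.php can differ between crawlers.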

Example 2: Block internal search results (often low-value pages)

I add this when WordPress search pages get indexed and clutter results.

User-agent: *

Disallow: /?s=

Disallow: /search/

Why it helps: site search URLs can create duplicate content pages. Blocking them with the disallow directive reduces crawler time spent on thin content.
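One thing worth checking is which URLs these two lines actually catch. Rules match from the start of the path (including the query string), so a search query on a sub-path is not covered. The Python sketch below is my own simplified matcher, with example.com URLs as placeholders, showing that behavior for plain prefixes.

```python
from urllib.parse import urlsplit


def matched_rule(disallow_prefixes, url):
    """Return the first Disallow prefix that matches the URL's path and query, else None."""
    parts = urlsplit(url)
    target = parts.path or "/"
    if parts.query:
        target += "?" + parts.query
    for prefix in disallow_prefixes:
        if target.startswith(prefix):
            return prefix
    return None


prefixes = ["/?s=", "/search/"]
for url in [
    "https://example.com/?s=robots",
    "https://example.com/search/robots/",
    "https://example.com/blog/?s=robots",
    "https://example.com/?post_type=page&s=robots",
]:
    print(url, "->", matched_rule(prefixes, url))
```

The first two URLs are blocked. The last two slip through because the path or the query does not begin with one of the listed prefixes, so it pays to test the search URLs your theme really generates.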

Example 3: Staging site “do not crawl” file (use carefully)

This is the one I use on staging or temporary dev domains only.

User-agent: *

Disallow: /

What it does: The user-agent line combined with the disallow directive blocks all crawling. Perfect for staging, disastrous on production.
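Because this file is so dangerous in the wrong place, one option is to choose the content by hostname at deploy time instead of copying files by hand. The following Python sketch illustrates that idea; the hostnames, constants, and function name are all hypothetical, and it is not tied to any particular host or plugin.

```python
BLOCK_ALL = "User-agent: *\nDisallow: /\n"
DEFAULT = "User-agent: *\nDisallow: /wp-admin/\nAllow: /wp-admin/admin-ajax.php\n"


def robots_for_host(host, production_hosts):
    """Pick robots.txt content by hostname; only non-production hosts get the block-all file."""
    if host.lower() in production_hosts:
        return DEFAULT
    return BLOCK_ALL


production = {"example.com", "www.example.com"}   # hypothetical production hostnames
print(robots_for_host("staging.example.com", production))
print(robots_for_host("example.com", production))
```

The point of the design is that the block-all rules can never be produced for a hostname on the production list, whatever else goes wrong during a migration.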

Example 4: Block AI crawlers to protect content

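Use these robots.txt rules to ask AI training bots not to crawl your site. Like every robots.txt rule, this only works for bots that choose to honor it. A crawl delay is sometimes used to slow down specific bots, though not every crawler supports it (Googlebot ignores Crawl-delay).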

User-agent: GPTBot

Disallow: /

User-agent: ClaudeBot

Disallow: /

User-agent: *

Crawl-delay: 10

What it does: The first two groups target specific AI crawlers by user-agent and disallow the whole site for them. The last group asks every other bot to pause between requests (the value is typically read as seconds), which only affects crawlers that support Crawl-delay.
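To see how a parser assigns each bot to a group, Python’s standard-library `urllib.robotparser` can read these rules directly. This is only a sketch of one parser’s interpretation, with an example.com URL as a placeholder; real crawlers use their own implementations.

```python
from urllib.robotparser import RobotFileParser

ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: ClaudeBot
Disallow: /

User-agent: *
Crawl-delay: 10
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# For each bot: may it fetch a blog URL, and what crawl delay applies to it?
for agent in ["GPTBot", "ClaudeBot", "Googlebot"]:
    print(agent, parser.can_fetch(agent, "https://example.com/blog/"), parser.crawl_delay(agent))
```

The named bots fall into their own groups and are refused, while any other agent falls back to the `*` group, which allows crawling and carries the delay.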

A quick note on removing something from Google

If a page is already indexed, robots.txt is not the cleanest removal tool. In many cases, I allow crawling and add noindex, then wait for recrawl. After that, I tighten robots rules if I still need them.

Conclusion

A custom robots.txt in WordPress is like putting clear signs on doors in your building. The best robots.txt rules are short, readable, and hard to misinterpret. Start by checking your current /robots.txt, decide whether a plugin, a physical file, or custom code in functions.php that changes the virtual robots.txt output fits your setup, then use a conservative template and test it on the live URL. When in doubt, protect crawl access first, because one bad line can hide your best content or block your whole site.